CPU vs. GPU vs. NPU: How They Work Together

When your laptop fans scream during a simple video call, the usual cause is an overworked general purpose processor struggling to blur your background while also managing a dozen open browser tabs. This inefficiency directly affects your battery life and the responsiveness of every click you make.

For years, consumers focused only on the single central brain of their devices, but modern hardware now relies on a specialized team of three distinct engines. Choosing a new computer today requires looking beyond raw clock speeds to see how hardware handles specific data types.

Key Takeaways

- The CPU remains essential for managing the operating system and performing serial tasks that require complex logic and immediate decision making.

- GPUs excel at parallel processing, using thousands of small cores to handle visual rendering and heavy data simulations that would overwhelm a standard processor.

- NPUs are specialized engines designed specifically for matrix multiplication, allowing them to run AI tasks like noise cancellation or facial recognition with very low power.

- Unified Memory Architecture allows the CPU, GPU, and NPU to share the same data pool, which removes most of the need to copy information between separate memory pools.

- On-device AI processing via the NPU enhances user privacy and reduces latency by keeping sensitive data on the local hardware instead of sending it to the cloud.

Fundamental Architectures

Modern computing power relies on a strategic division of labor rather than a single, all powerful chip. By distributing tasks according to their complexity and structure, a system maintains speed without wasting energy.

This architecture separates general logic from massive data crunching, allowing each component to operate where it is most effective.

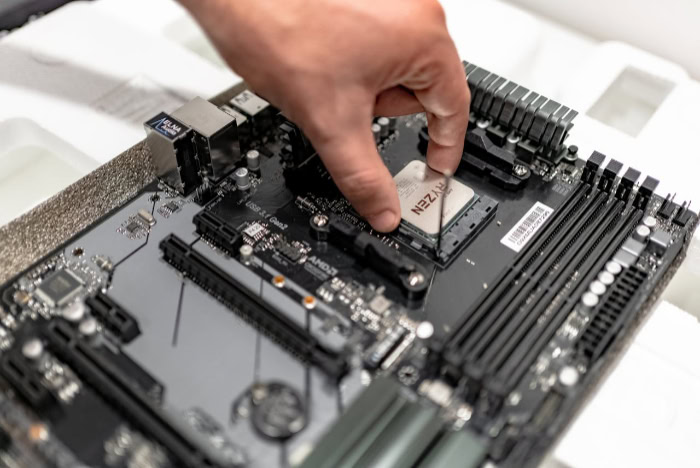

The CPU: The Executive Processor

The Central Processing Unit serves as the primary director of the system, acting much like a high level executive. It is designed for serial processing, meaning it handles one task after another with extreme speed.

Its strength lies in complex branch prediction, where the chip guesses which path a program will take next so it can start that work early instead of waiting. This makes the CPU indispensable for the unpredictable work of running an operating system or following the branching logic of a standard application.
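
To make branch prediction more concrete, here is a minimal sketch of a two-bit saturating counter, a classic textbook scheme. Real CPUs use far more elaborate predictors, and the class name, function name, and sample branch history below are hypothetical and chosen only for illustration.

```python
class TwoBitPredictor:
    """Textbook two-bit saturating counter with states 0 to 3."""

    def __init__(self):
        self.state = 2  # start at "weakly taken"

    def predict(self):
        # States 2 and 3 predict "taken"; states 0 and 1 predict "not taken".
        return self.state >= 2

    def update(self, taken):
        # Move one step toward the observed outcome, saturating at 0 and 3.
        if taken:
            self.state = min(3, self.state + 1)
        else:
            self.state = max(0, self.state - 1)


def accuracy(history):
    """Fraction of branches in `history` that were predicted correctly."""
    predictor = TwoBitPredictor()
    correct = 0
    for taken in history:
        if predictor.predict() == taken:
            correct += 1
        predictor.update(taken)
    return correct / len(history)


# Hypothetical loop branch: taken nine times, then not taken once, repeated.
loop_history = ([True] * 9 + [False]) * 10
print(accuracy(loop_history))
```

On this regular pattern the predictor is wrong only when the loop exits, so nine out of every ten guesses are correct. Less regular code is harder to predict, which is why so much CPU hardware is devoted to the problem.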

The GPU: The Parallel Workhorse

Graphics Processing Units take a different approach by prioritizing volume over individual speed. While a CPU might have eight or sixteen powerful cores, a GPU contains thousands of smaller, simpler cores.

These cores work in parallel to perform many identical calculations at once. This structure was originally built to calculate the color and position of millions of pixels on a screen, but it has since become the primary engine for any task that can be broken down into massive, simultaneous workloads.

The NPU: The Dedicated AI Specialist

Neural Processing Units are the newest members of the processing family, designed with a narrow but intense focus on machine learning. While a CPU or GPU can technically perform AI calculations, the NPU is physically wired to handle the specific mathematical structures of neural networks.

It focuses almost entirely on matrix multiplication and accumulation. By stripping away the hardware needed for general logic or graphics rendering, the NPU performs AI tasks with a fraction of the energy required by other chips.

Processing Logic

The way a processor handles data defines its mathematical logic and its ultimate speed. Data ranges from single numbers to massive multidimensional grids, and each processing unit uses a different strategy to work through it.

Scalar Processing for Precise Logic

Scalar processing is the foundation of traditional computing, where the CPU operates on single data points one at a time. This method is highly effective for control-heavy tasks and system management, where the next step depends on the result of the current one.

Because these tasks are often linear and require complex decision making, the scalar approach ensures that the logic remains sound and the system remains stable.
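
A small sketch can show what "the next step depends on the current one" looks like in code. The Collatz rule is used here purely as an illustration (it does not appear in the article), and the function name is hypothetical.

```python
def collatz_steps(n):
    """Count the steps needed for n to reach 1 under the Collatz rule."""
    steps = 0
    while n != 1:
        # Which branch runs depends on the value produced by the previous
        # step, so step k+1 cannot begin before step k has finished.
        if n % 2 == 0:
            n //= 2
        else:
            n = 3 * n + 1
        steps += 1
    return steps


print(collatz_steps(6))
```

Because every iteration needs the previous result, adding thousands of extra cores would not make this loop faster. Work of this shape is where a fast, branch-aware CPU core does best.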

Vector Processing for Graphics

Vector processing allows a single command to affect an entire list of data points simultaneously. This is the primary language of the GPU.

When a piece of software needs to move an object in a 3D space, every vertex of that object must be updated using the same mathematical formula. Instead of calculating each point individually, vector processing applies the formula to the whole group at once.

This significantly increases the speed of visual rendering and data heavy simulations.
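
The vertex example above can be sketched as follows. Python still runs this one element at a time, so it only illustrates the shape of the work; the function name and the triangle coordinates are hypothetical.

```python
def translate(vertices, offset):
    """Apply the same translation to every (x, y, z) vertex."""
    dx, dy, dz = offset
    # One formula, many data points: each result is independent of the
    # others, which is what lets a GPU compute them at the same time.
    return [(x + dx, y + dy, z + dz) for (x, y, z) in vertices]


# Hypothetical triangle moved two units along the x axis.
triangle = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
moved = translate(triangle, (2.0, 0.0, 0.0))
print(moved)
```

No vertex needs to know the result for any other vertex, so the work can be split across as many cores as the hardware offers.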

Tensor and Matrix Processing for Neural Networks

Tensor processing is the form of data handling at the heart of artificial intelligence workloads. NPUs are designed to process tensors, which are multidimensional arrays of numbers that hold the weights and activations of a neural network.

By using dedicated hardware for “multiply-accumulate” operations, the NPU can perform a very large number of them in every clock cycle. This avoids much of the overhead associated with general purpose hardware, allowing a device to recognize a face or translate text with very little delay.
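
As a rough illustration of what a multiply-accumulate operation is, the sketch below multiplies two small matrices with explicit multiply-accumulate steps and counts them. The function name and the sample values are hypothetical; an NPU performs the same arithmetic in dedicated hardware on far larger matrices.

```python
def matmul_mac(a, b):
    """Multiply a (m x k) by b (k x n) using explicit multiply-accumulate steps."""
    m, k, n = len(a), len(b), len(b[0])
    out = [[0] * n for _ in range(m)]
    mac_count = 0
    for i in range(m):
        for j in range(n):
            acc = 0
            for p in range(k):
                acc += a[i][p] * b[p][j]  # one multiply-accumulate (MAC)
                mac_count += 1
            out[i][j] = acc
    return out, mac_count


# Hypothetical tiny layer: two input rows of three values, three-by-two weights.
inputs = [[1, 2, 3], [4, 5, 6]]
weights = [[1, 0], [0, 1], [1, 1]]
result, macs = matmul_mac(inputs, weights)
print(result, macs)
```

Even this tiny example needs 12 multiply-accumulate steps. The count grows with the product of all three matrix dimensions, which is why hardware built around this one operation pays off for neural networks.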

Practical Application

In daily use, the invisible hand of the operating system assigns tasks to the most suitable hardware. While a user might only see a finished video or a snappy spreadsheet, the background distribution of these workloads is what prevents a device from freezing.

Operating System and Productivity Management

Standard productivity involves a high volume of small, unpredictable decisions. Opening a file, typing in a document, or browsing the web requires the CPU to manage thousands of tiny, varied instructions.

These tasks do not benefit from parallel processing because they are highly dependent on user input and immediate logical feedback. The CPU remains the primary driver for these actions, ensuring that the system feels responsive and fluid.

Visual and Computational Production

When a task requires rendering 3D environments or exporting high definition video, the system shifts the load to the GPU. These workloads involve consistent, repetitive calculations that would overwhelm a CPU.

In modern gaming, the GPU handles the lighting, shadows, and, in some engines, parts of the physics simulation. Similarly, in professional video editing, the GPU manages the heavy lifting of color grading and effects, freeing up the CPU to keep the rest of the application running.

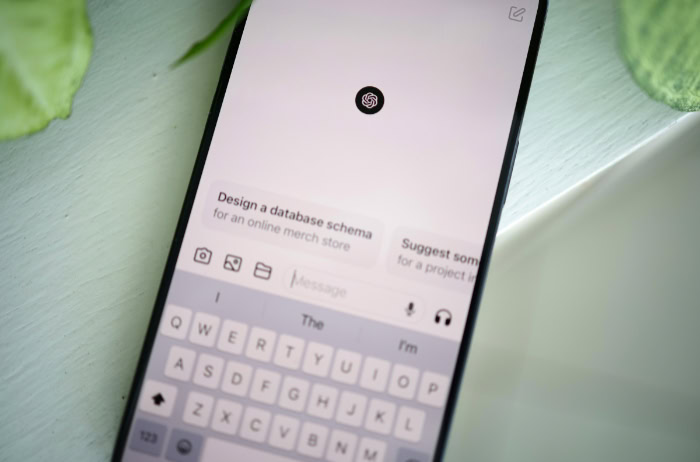

Real Time AI Inference

Artificial intelligence tasks operate differently than traditional software because they rely on patterns rather than rigid logic. The NPU takes over for background features that require constant, real time analysis.

This includes noise cancellation during a video call, real time image enhancement in a camera app, and biometric security like face or fingerprint recognition. By moving these tasks to the NPU, the device can provide these features without draining the battery or slowing down other active programs.

Power, Efficiency, and Throughput

Success in hardware performance is no longer defined by a single number. While speed remains important, the relationship between work completed and energy consumed has become the primary metric for modern devices.

Clock Speed and Instructions Per Clock

The most common way to judge a CPU is by its clock speed, measured in gigahertz (GHz). One gigahertz means the processor completes one billion cycles per second.

However, clock speed alone does not tell the whole story. Instructions Per Clock (IPC) measures how much actual work is done during each of those cycles.

A modern CPU with a lower clock speed but a higher IPC can often outperform an older chip with a higher frequency, making efficiency just as important as raw speed.
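
The relationship between clock speed and IPC is simple arithmetic, sketched below. The two chips and their figures are hypothetical and chosen only to show the calculation; real IPC varies with the workload.

```python
def instructions_per_second(clock_ghz, ipc):
    """Rough single-core throughput: cycles per second times instructions per cycle."""
    return clock_ghz * 1e9 * ipc


# Hypothetical chips, not measurements of any real product.
older = instructions_per_second(clock_ghz=4.0, ipc=2.0)
newer = instructions_per_second(clock_ghz=3.0, ipc=4.0)
print(older, newer, newer / older)
```

In this made-up comparison the chip with the lower clock speed still completes 1.5 times as many instructions per second, because it does twice as much work in each cycle.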

Throughput and Efficiency Metrics

Throughput measures how much total work a processor can complete in a set time, which is the primary metric for GPUs and NPUs. For a GPU, this is often measured in Teraflops, representing trillions of floating point operations per second.

For an NPU, the metric shifts to TOPS, or trillions of operations per second. Teraflops count floating point operations, while TOPS figures usually count the lower precision integer operations common in AI inference. TOPS alone says nothing about energy use; dividing it by power draw gives TOPS per watt, which shows how much AI work a chip can deliver for each watt of power.
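
A back-of-the-envelope sketch shows how a peak TOPS figure and a per-watt figure can be derived. It assumes the common convention of counting each multiply-accumulate as two operations; the function names and the NPU figures are hypothetical, and vendors may calculate their published numbers differently.

```python
def peak_tops(mac_units, clock_ghz, ops_per_mac=2):
    """Theoretical peak throughput in trillions of operations per second."""
    return mac_units * ops_per_mac * clock_ghz * 1e9 / 1e12


def tops_per_watt(tops, watts):
    """Efficiency: how many TOPS the chip delivers for each watt it draws."""
    return tops / watts


# Hypothetical NPU: 4096 MAC units running at 1.5 GHz and drawing 5 W.
tops = peak_tops(mac_units=4096, clock_ghz=1.5)
print(tops, tops_per_watt(tops, watts=5.0))
```

These hypothetical figures work out to a little over 12 TOPS and roughly 2.5 TOPS per watt. Peak numbers like this are upper bounds, and sustained performance depends on memory bandwidth and on the model being run.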

Thermal and Battery Sustainability

Modern hardware must balance performance against the physical limits of heat and battery life. If a GPU handles an AI task, it may finish quickly but will generate significant heat and consume a large amount of power.

The NPU is designed to deliver comparable performance on the AI tasks it supports while using significantly less energy. This thermal balance is what allows a smartphone to perform complex image processing without becoming hot to the touch or running out of battery before the end of the day.
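
The trade-off between finishing quickly and consuming less energy comes down to power multiplied by time. The sketch below uses hypothetical figures for the same AI task on two units; they are illustrative values, not measurements.

```python
def energy_joules(power_watts, seconds):
    """Energy consumed is average power multiplied by elapsed time."""
    return power_watts * seconds


# Hypothetical figures for one AI task.
gpu_energy = energy_joules(power_watts=30.0, seconds=1.0)  # fast but power hungry
npu_energy = energy_joules(power_watts=2.0, seconds=3.0)   # slower but frugal
print(gpu_energy, npu_energy)
```

With these numbers the slower unit still uses one fifth of the energy. For a background feature that runs all day, total energy matters more to battery life than the speed of any single run.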

The Integrated Ecosystem

Modern phones and most laptops rarely use separate, isolated chips for these functions; instead, they combine them into a single System on a Chip. This physical proximity allows the different processors to work as a unified team.

By sharing resources and communicating instantly, these components create a more responsive and secure user experience.

Hardware Scheduling and Task Distribution

Operating systems, together with drivers and application frameworks, use scheduling logic to decide which processor handles a request. This happens in real time, routing work to the CPU, GPU, or NPU based on the type of task and its current priority.

If you are playing a game while a background AI process filters your microphone, the scheduler ensures the GPU gets the resources for the graphics while the NPU manages the audio. This constant coordination prevents any single unit from becoming a bottleneck.
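
The routing idea can be sketched as a toy dispatcher. This is a simplified illustration, not how any particular operating system works; the workload categories, the `PREFERRED` table, and the `dispatch` function are all hypothetical.

```python
from enum import Enum


class Unit(Enum):
    CPU = "CPU"
    GPU = "GPU"
    NPU = "NPU"


# Hypothetical mapping from workload kind to preferred processing unit.
PREFERRED = {
    "branchy_logic": Unit.CPU,
    "graphics": Unit.GPU,
    "ai_inference": Unit.NPU,
}


def dispatch(kind, available):
    """Pick the preferred unit, falling back to the CPU if it is missing."""
    unit = PREFERRED.get(kind, Unit.CPU)
    return unit if unit in available else Unit.CPU


tasks = [
    ("render_frame", "graphics"),
    ("denoise_mic", "ai_inference"),
    ("handle_click", "branchy_logic"),
]
all_units = {Unit.CPU, Unit.GPU, Unit.NPU}
plan = {name: dispatch(kind, all_units) for name, kind in tasks}
print(plan)
```

The fallback to the CPU reflects its general purpose role: on a machine without an NPU, the same AI task still runs, only less efficiently.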

Shared Memory and Bandwidth Efficiency

Unified Memory Architecture is a significant advancement in chip design that allows the CPU, GPU, and NPU to access the same pool of high speed memory. In systems with separate memory pools, such as a PC with a discrete graphics card, data has to be copied from one chip’s memory to another, which creates delays and wastes power.

With a unified approach, all three units can see and use the same data simultaneously. This increases bandwidth efficiency and allows for much faster transitions between different types of tasks.
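
The difference between sharing a buffer and copying it can be shown with a loose software analogy. In the sketch below a `memoryview` stands in for a second processor reading shared memory, and `bytes(...)` stands in for a copy into a separate memory pool; the variable names are hypothetical, and real hardware behavior is considerably more involved.

```python
frame = bytearray(8)             # stands in for a buffer in shared memory

shared_view = memoryview(frame)  # a second reader looking at the same bytes
private_copy = bytes(frame)      # a second reader holding its own copy

frame[0] = 255                   # the producer updates the buffer

print(shared_view[0])   # reflects the update, because no copy was made
print(private_copy[0])  # still the old value until the data is copied again
```

The shared view sees the new value immediately, while the copy stays stale. Avoiding that extra copy step is the saving that a unified memory design aims for.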

Local Processing and User Privacy

Moving AI tasks to a local NPU provides benefits beyond just speed. When a device processes a voice command or a facial scan locally, that data never needs to leave the device for a cloud server.

This on device inference reduces latency, as there is no need to wait for a round trip to a data center. More importantly, it enhances privacy by keeping sensitive personal information stored and processed entirely within the local hardware ecosystem.

Conclusion

The hierarchy of computer hardware has shifted from a single dominant unit to a collaborative ecosystem. The CPU acts as the brain of the machine, handling logic and making decisions.

The GPU provides the muscle, crunching through visual data and massive parallel sets. Meanwhile, the NPU functions as a reflex, responding instantly to AI patterns with minimal energy.

This synergy ensures that modern devices can handle high-intensity workloads while remaining cool and efficient. A balanced system does not rely on the strength of one component, but rather on how these three distinct engines work together to create a seamless experience.

Frequently Asked Questions

Do I really need an NPU if I already have a fast GPU?

A dedicated NPU is valuable because it handles AI tasks with significantly better energy efficiency than a GPU. While a GPU can technically perform AI calculations, it consumes much more power and generates more heat. Using an NPU preserves your battery life while keeping your device cool during background AI tasks.

Why does my computer still rely on a CPU for basic browsing?

Web browsing and standard application logic are largely serial tasks that depend on the fast single-thread performance and complex branch prediction of a CPU. The logic behind these activities gains little from the parallel structure of a GPU or the matrix focus of an NPU. The CPU remains the most effective engine for managing these unpredictable user inputs.

Can a good NPU make my games run at higher frame rates?

An NPU will generally not increase your raw frame rates, as that remains the primary responsibility of the GPU. However, an NPU can improve the overall gaming experience by handling background AI features like voice-chat noise suppression. This allows the GPU to focus entirely on rendering the game world without distractions.

Does having these extra processors make my device more expensive?

While adding specialized hardware can increase manufacturing costs, the efficiency gains often lead to a better overall value through improved battery life and longevity. Most modern systems integrate these units into a single chip. This integration reduces the total number of components needed while significantly boosting the performance of modern software.

Will my data be safer if my laptop has an NPU?

Having a local NPU improves your privacy by allowing AI models to process sensitive data directly on your device hardware. Instead of sending your voice recordings or biometric scans to a remote cloud server, the NPU handles the analysis locally. For features that actually run on the device, this keeps your personal information on your machine and reduces security risks.