Dual Channel vs. Quad Channel: Is More Always Better?

Your computer’s performance often hinges on a hardware detail that many users overlook during their initial build: memory channels. These communication paths act as the data highway between your RAM and the processor.

In this analogy, adding more channels is like adding lanes to a freeway. A dual-lane road allows for twice the traffic of a single path, while a four-lane expressway provides the massive throughput required for heavy-duty workloads.

However, simply plugging in more sticks of memory does not always translate to faster speeds. The hardware you choose dictates the limits of your system’s potential.

Key Takeaways

- Dual-channel memory doubles theoretical bandwidth by using two 64-bit data paths simultaneously.

- Quad-channel configurations require specific high-end desktop processors and motherboards to function.

- Standard consumer CPUs are architecturally limited to dual-channel operation even if four slots are present on the board.

- DDR5 introduces sub-channels that improve efficiency but do not change the processor’s native channel count.

- Two memory sticks are generally more stable and easier to overclock than four sticks on mainstream motherboards.

Understanding Architecture: How Channels Work

The relationship between the processor and the system memory is defined by the number of active communication lines available. These paths determine how much data moves back and forth during every clock cycle.

Without a proper understanding of how these lanes function, it is easy to assume that more RAM sticks always lead to more speed. In reality, the architecture of your processor dictates the maximum amount of data that can be processed at once, regardless of the amount of memory installed.

The Role of the Integrated Memory Controller

Every modern processor contains an Integrated Memory Controller, or IMC. This hardware component serves as the gatekeeper, managing the flow of data between the system memory and the CPU cores.

The IMC is hardwired to support a specific number of channels. If a processor is designed for dual-channel operation, adding more memory modules will not increase the number of paths; it simply adds more capacity to the existing lanes.

The efficiency of this controller determines how well the system handles high-speed data transfers without data corruption or lag.

Single vs. Multi-Channel Configurations

A single channel of memory utilizes a 64-bit data path. When a system operates in single-channel mode, all data must travel through this lone corridor.

Dual-channel configurations utilize two 64-bit paths to create a 128-bit total interface, allowing the CPU to access two modules simultaneously. Quad-channel setups expand this further, using four parallel paths for a 256-bit total.

This parallelism effectively multiplies the theoretical amount of data that can be transferred every second, providing a wider pipe for information to travel through.
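The arithmetic behind these figures can be sketched in a few lines. This is a rough illustration only: the function name is made up for this example, DDR4-3200 is an assumed data rate, and the results are theoretical peaks that real systems do not sustain.

```python
def peak_bandwidth_gb_s(channels: int, data_rate_mt_s: int,
                        channel_width_bits: int = 64) -> float:
    """Theoretical peak bandwidth in GB/s (1 GB = 10**9 bytes)."""
    # Bytes moved per transfer across all channels combined.
    bytes_per_transfer = channels * channel_width_bits // 8
    # MT/s means millions of transfers per second.
    return bytes_per_transfer * data_rate_mt_s * 1_000_000 / 1e9


# Assumed example: DDR4-3200 (3200 MT/s) on 1, 2, and 4 channels.
for name, channels in [("single", 1), ("dual", 2), ("quad", 4)]:
    width = channels * 64
    peak = peak_bandwidth_gb_s(channels, 3200)
    print(f"{name}-channel: {width}-bit bus, {peak:.1f} GB/s peak")
# single-channel: 64-bit bus, 25.6 GB/s peak
# dual-channel: 128-bit bus, 51.2 GB/s peak
# quad-channel: 256-bit bus, 102.4 GB/s peak
```

Each added channel contributes the same 8 bytes per transfer, which is why the theoretical figure scales linearly with channel count.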

Bandwidth vs. Latency

It is important to distinguish between bandwidth and latency, as they affect performance in different ways. Bandwidth refers to the total volume of data that can be moved at once, much like the width of a pipe.

Adding channels increases this volume. Latency, however, is the delay between a command being issued and the data being delivered.

While multi-channel setups significantly boost bandwidth, they generally do not lower latency. A system might move massive amounts of data at once but still experience a slight delay in starting that transfer, which is why high bandwidth does not always improve every type of software.
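To see why channel count does not enter into latency, consider the common rule of thumb for first-word latency, which depends only on the CAS latency (CL) and the data rate. The sketch below is a simplification that ignores other timings and controller overhead, and the two kits are assumed examples rather than recommendations.

```python
def first_word_latency_ns(cas_latency: int, data_rate_mt_s: int) -> float:
    """Approximate CAS delay in nanoseconds.

    DDR memory transfers twice per clock, so the clock runs at half the
    data rate and one clock cycle lasts 2000 / data_rate_mt_s nanoseconds.
    """
    return cas_latency * 2000 / data_rate_mt_s


# Assumed example kits. Note that channel count is not an input at all.
print(first_word_latency_ns(16, 3200))  # DDR4-3200 CL16 -> 10.0
print(first_word_latency_ns(30, 6000))  # DDR5-6000 CL30 -> 10.0
```

Both example kits land at roughly the same delay, whether they run in single-, dual-, or quad-channel mode.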

Hardware Compatibility and Requirements

Hardware limitations often prevent users from reaching the highest possible memory speeds. Even if you purchase the fastest RAM available, your motherboard and CPU must be physically and architecturally capable of supporting multi-channel configurations.

Compatibility is not just about having enough physical slots on the board; it is about the internal wiring and the specific capabilities of the silicon inside your machine.

Consumer vs. Workstation Platforms

Most consumer-grade hardware, such as the standard Intel Core or AMD Ryzen series, is built on a dual-channel architecture. These platforms prioritize cost-efficiency and power management for everyday tasks and gaming.

In contrast, High-End Desktop (HEDT) or workstation platforms, like AMD Threadripper or Intel Xeon, are designed for quad-channel or even octa-channel support. These professional systems feature more pins on the CPU and complex motherboard traces to handle the massive data requirements of heavy industrial applications.

Physical Slots and Motherboard Layouts

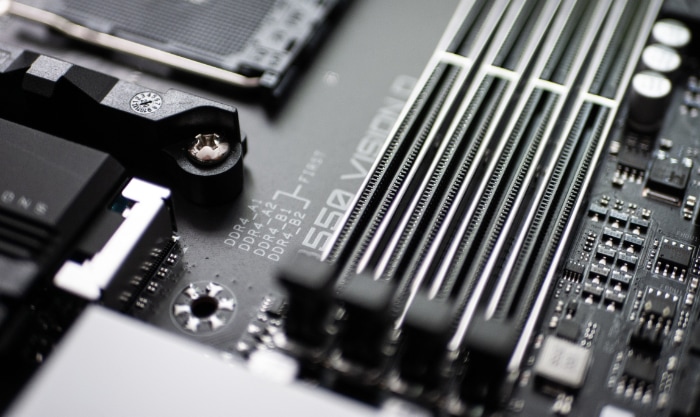

A common misconception is that a motherboard with four DIMM slots automatically supports quad-channel memory. On most mainstream boards, those four slots are still connected to only two channels.

Slots are often color-coded to show users which ones to populate first to enable dual-channel mode. Placing two sticks in the wrong slots can force the system into single-channel operation, cutting potential bandwidth in half.

Proper population according to the manual is required to ensure the CPU can access both channels effectively.
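The slot-to-channel relationship can be modeled as a simple lookup. The slot labels below (A1, A2, B1, B2) are an assumed naming scheme for a typical four-slot, dual-channel board; your motherboard manual may use different labels and recommend a different population order.

```python
# Hypothetical slot labels: two slots wired to channel A, two to channel B.
SLOT_TO_CHANNEL = {"A1": "A", "A2": "A", "B1": "B", "B2": "B"}


def channel_mode(populated_slots: list[str]) -> str:
    """Report the channel mode implied by which slots hold a module."""
    channels = {SLOT_TO_CHANNEL[slot] for slot in populated_slots}
    if not channels:
        return "no memory"
    return "dual-channel" if len(channels) == 2 else "single-channel"


print(channel_mode(["A2", "B2"]))              # dual-channel
print(channel_mode(["A1", "A2"]))              # single-channel
print(channel_mode(["A1", "A2", "B1", "B2"]))  # dual-channel
```

Four populated slots still report dual-channel here, because the number of channels is fixed by the wiring, not by the number of modules.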

CPU Pin Count and Design

The physical connection between the CPU and the motherboard is a limiting factor for channel support. Every memory channel requires a specific number of pins on the CPU socket to communicate with the RAM.

This is one reason workstation processors are physically much larger than consumer chips. A processor with a lower pin count simply lacks the physical wiring necessary to talk to four independent memory channels at once.

The internal design of the CPU must also have enough logic gates within the memory controller to manage the synchronization of multiple data streams simultaneously.

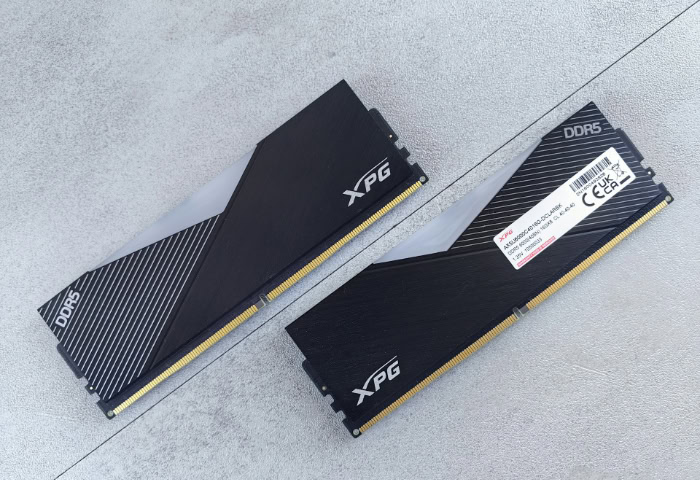

The DDR5 Evolution: Sub-Channels and Reporting

The release of DDR5 memory introduced a significant shift in how memory channels are structured and reported. Unlike older standards, DDR5 was designed to improve efficiency by splitting the workload within each individual stick of RAM.

This change has led to some confusion among users who rely on monitoring software to check their system specs, as the numbers reported often seem to contradict the physical hardware installed.

Internal Architecture of DDR5

A standard DDR4 module operates as a single 64-bit channel. DDR5 changes this by splitting that 64-bit path into two independent 32-bit sub-channels per module.

This does not double the total width, but it allows the memory to handle two smaller tasks at the same time instead of waiting for one large task to finish. This architectural tweak reduces the time the memory spends waiting and improves the overall efficiency of data retrieval, especially in modern multi-threaded applications.

Why Software Reports Quad Channel

Because each DDR5 module contains two sub-channels, a system with two sticks of DDR5 is technically running four independent 32-bit paths. Popular diagnostic tools often detect these four sub-channels and display “Quad Channel” in the interface.

This reporting is technically accurate from a logical perspective, but it can be misleading. It does not mean a consumer PC has suddenly gained the massive 256-bit bus of a workstation; it simply means the two 64-bit channels are being managed in smaller increments.
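A short calculation makes the distinction concrete. The helper below is illustrative only; it assumes one module per channel and uses the data widths quoted above.

```python
def bus_summary(modules: int, subchannels_per_module: int,
                subchannel_width_bits: int) -> tuple[int, int]:
    """Return (independent paths, total bus width in bits).

    Assumes each module sits on its own channel.
    """
    paths = modules * subchannels_per_module
    return paths, paths * subchannel_width_bits


print(bus_summary(2, 1, 64))  # two DDR4 sticks, consumer board -> (2, 128)
print(bus_summary(2, 2, 32))  # two DDR5 sticks, consumer board -> (4, 128)
print(bus_summary(4, 1, 64))  # four DDR4 sticks, quad-channel workstation -> (4, 256)
```

The DDR5 consumer build and the quad-channel workstation both show four paths, but only the workstation has the 256-bit total width.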

Theoretical Gains vs. Practical Performance

While the sub-channel design of DDR5 offers a clear upgrade over DDR4, it is not a replacement for a true HEDT quad-channel setup. A mainstream DDR5 system still relies on a dual-channel memory controller.

The benefit of DDR5 comes from its ability to use its available bandwidth more effectively, rather than simply having a wider path. Professional workstations with true quad-channel support still hold a massive advantage in raw data throughput because they use four full 64-bit paths, providing double the total width of a high-end consumer DDR5 build.

Performance Impact and Practical Use Cases

The practical benefit of adding memory channels depends heavily on the specific tasks a computer performs. While a massive increase in theoretical bandwidth sounds beneficial, many software applications are unable to utilize the extra data throughput.

Gaming and Everyday Computing

Most modern games see diminishing returns when moving beyond a dual-channel configuration. Because gaming is more sensitive to the speed of data access than to the total volume of data being moved, the frequency of the RAM (measured in MHz or MT/s) plays a more significant role in frame rates than the number of channels.

For the average user browsing the web, streaming video, or playing the latest titles, the 128-bit bus of a dual-channel system provides more than enough room for the processor to function without hitting a bottleneck.

Professional and Data-Intensive Tasks

Quad-channel memory truly shines in environments where massive datasets are processed simultaneously. In 4K and 8K video editing, the system must move enormous files through the memory as you scrub through a timeline or render a final project.

Similarly, scientific simulations, virtual machine hosting, and complex 3D rendering benefit from the 256-bit bus provided by workstation platforms. These tasks can saturate standard dual-channel bandwidth, making the extra lanes of a quad-channel setup essential for reducing wait times and improving productivity.
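As a back-of-the-envelope illustration of what the extra lanes buy, the sketch below estimates the minimum time for one full pass over a dataset at theoretical peak bandwidth. The 64 GB dataset size and the DDR4-3200 data rate are assumptions chosen for round numbers, and real workloads will be slower than these lower bounds.

```python
def min_pass_time_s(dataset_gb: float, channels: int,
                    data_rate_mt_s: int) -> float:
    """Lower bound, in seconds, for streaming a dataset through memory once."""
    # Each 64-bit channel moves 8 bytes per transfer.
    bytes_per_second = channels * 8 * data_rate_mt_s * 1_000_000
    return dataset_gb * 1e9 / bytes_per_second


# Assumed example: a 64 GB working set on DDR4-3200.
print(min_pass_time_s(64, channels=2, data_rate_mt_s=3200))  # 1.25
print(min_pass_time_s(64, channels=4, data_rate_mt_s=3200))  # 0.625
```

For a job that makes thousands of such passes, halving the floor on each one is where the productivity gain comes from.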

Performance Gains for Integrated Graphics

Systems that lack a dedicated graphics card rely on the CPU’s integrated graphics (iGPU) for all visual processing. Unlike a separate video card that has its own high-speed VRAM, an iGPU must share the system’s main RAM.

In these scenarios, memory bandwidth is often the primary factor limiting graphical performance. Moving from single-channel to dual-channel memory doubles the theoretical bandwidth available to the iGPU and can raise frame rates substantially, in some games approaching double.

While quad-channel would offer even more, it is rarely found on the budget-friendly platforms that typically rely on integrated graphics.

Practical Implementation: Two Sticks vs. Four Sticks

Deciding between using two or four sticks of RAM involves more than just reaching a target capacity. The physical arrangement of memory modules on the motherboard has a direct impact on the electrical signal quality and the overall stability of the computer.

While filling every available slot might look aesthetically pleasing, it introduces technical challenges that can limit the maximum speed of the memory or cause unexpected system failures.

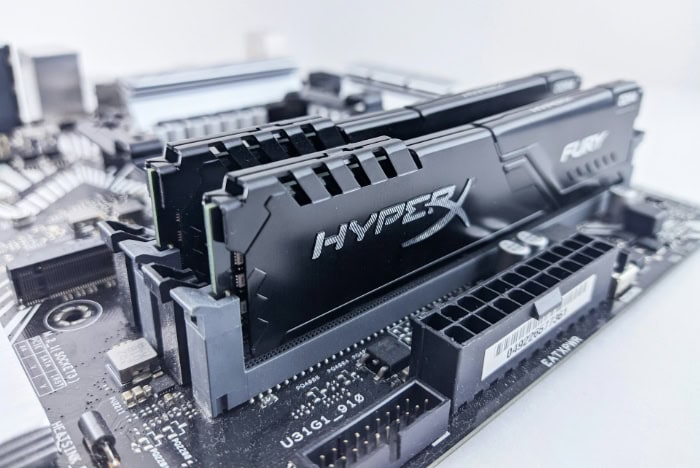

Stability and Overclocking Considerations

Populating all four slots on a motherboard places significantly more strain on the Integrated Memory Controller. Each channel must drive two modules instead of one, which increases the electrical load and often leads to timing issues or reduced signal integrity.

Because of this, it is often much harder to achieve high overclocked speeds (like those found in XMP or EXPO profiles) when using four sticks instead of two. For users seeking the highest possible frequencies and the most stable experience, using two high-capacity sticks is almost always preferable to using four smaller ones.

Motherboard Trace Layouts: Daisy Chain vs. T-Topology

The way the copper traces are laid out on a motherboard determines how well it handles different memory configurations. Most modern consumer motherboards use a “Daisy Chain” layout, which optimizes the signal for just two sticks of RAM.

In this design, the signal quality drops if all four slots are filled. Older or specialized motherboards sometimes used “T-Topology,” which ran traces of equal length to all four slots to improve stability for full configurations.

However, since Daisy Chain is now the standard, using only two sticks is the best way to ensure the CPU receives a clean, high-speed signal.

Using Validated and Matched Kits

To give a system the best chance of remaining stable, all installed memory modules should be identical in their timings, voltages, and internal components. Manufacturers sell memory in matched kits that have been tested to work together at specific speeds.

Mixing two different kits, even if they are the same model from the same brand, can lead to system crashes or a failure to boot. The internal chips on RAM sticks can change between manufacturing batches, so purchasing a single validated kit that contains the total amount of memory you need is the most reliable way to ensure multi-channel synchronization.
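A quick way to reason about a mixed configuration is to compare the rated specifications of each module and flag anything that differs. The sketch below uses a hypothetical `ModuleSpec` record with made-up part numbers. It only compares specifications on paper and cannot detect differences in the memory chips themselves, which is why a validated kit remains the safer choice.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class ModuleSpec:
    # Hypothetical record of a module's rated specifications.
    part_number: str
    capacity_gb: int
    data_rate_mt_s: int
    cas_latency: int
    voltage: float


def mismatched_fields(modules: list[ModuleSpec]) -> list[str]:
    """Return the names of spec fields that differ between modules."""
    if not modules:
        return []
    first = modules[0]
    return [
        f.name
        for f in fields(ModuleSpec)
        if any(getattr(m, f.name) != getattr(first, f.name) for m in modules[1:])
    ]


# Made-up example: two kits that share a rated speed but not their timings.
kit_a = ModuleSpec("EXAMPLE-KIT-A", 16, 3200, 16, 1.35)
kit_b = ModuleSpec("EXAMPLE-KIT-B", 16, 3200, 18, 1.35)

print(mismatched_fields([kit_a, kit_a]))  # []
print(mismatched_fields([kit_a, kit_b]))  # ['part_number', 'cas_latency']
```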

Conclusion

The choice between dual-channel and quad-channel memory depends on the balance between your platform’s physical design and your specific data needs. Dual channel remains the standard for most users because it provides the right amount of bandwidth for gaming and productivity without the high cost of workstation hardware.

Professional users who work with massive datasets or complex simulations should prioritize quad-channel platforms to avoid hardware bottlenecks. Matching your memory configuration to your motherboard’s native architecture ensures the best possible stability and performance.

Frequently Asked Questions

Does having four RAM slots mean I have quad channel?

No, most consumer motherboards with four slots still only support dual channel operation. The extra slots are typically wired in pairs to the same two channels. To achieve true quad channel performance, you must use a high-end desktop platform like AMD Threadripper or Intel Xeon that supports the wider 256-bit bus.

Can I mix different RAM brands in a dual channel setup?

While mixing brands might work, it is not recommended for system stability. Different manufacturers use various internal components and timings that may conflict when synchronized. For the best results, always purchase a single validated kit of identical modules to ensure the memory controller can manage the data flow without errors.

Does quad channel memory improve gaming performance?

For the majority of modern games, quad channel memory offers very little benefit over a dual channel setup. Gaming performance relies more on low latency and high memory frequency than raw bandwidth volume. Most titles are designed to run efficiently within the bandwidth limits of standard consumer hardware and dual channel configurations.

Why does CPU-Z say I have quad channel with only two DDR5 sticks?

DDR5 modules are designed with two independent 32-bit sub-channels per stick. Because of this architectural change, diagnostic software sees four active sub-channels when two sticks are installed and reports it as quad channel. This is a logical distinction rather than a physical change to the processor’s total memory bus width.

Is it better to use two 16GB sticks or four 8GB sticks?

Using two 16GB sticks is generally better for system stability and speed. Populating all four slots on a mainstream motherboard increases the electrical load on the memory controller. This extra strain can prevent the RAM from reaching its rated speeds and may lead to crashes during intensive tasks or gaming sessions.